Arm Architecture on Track to Power 90% of AI Server Custom Silicon by 2029

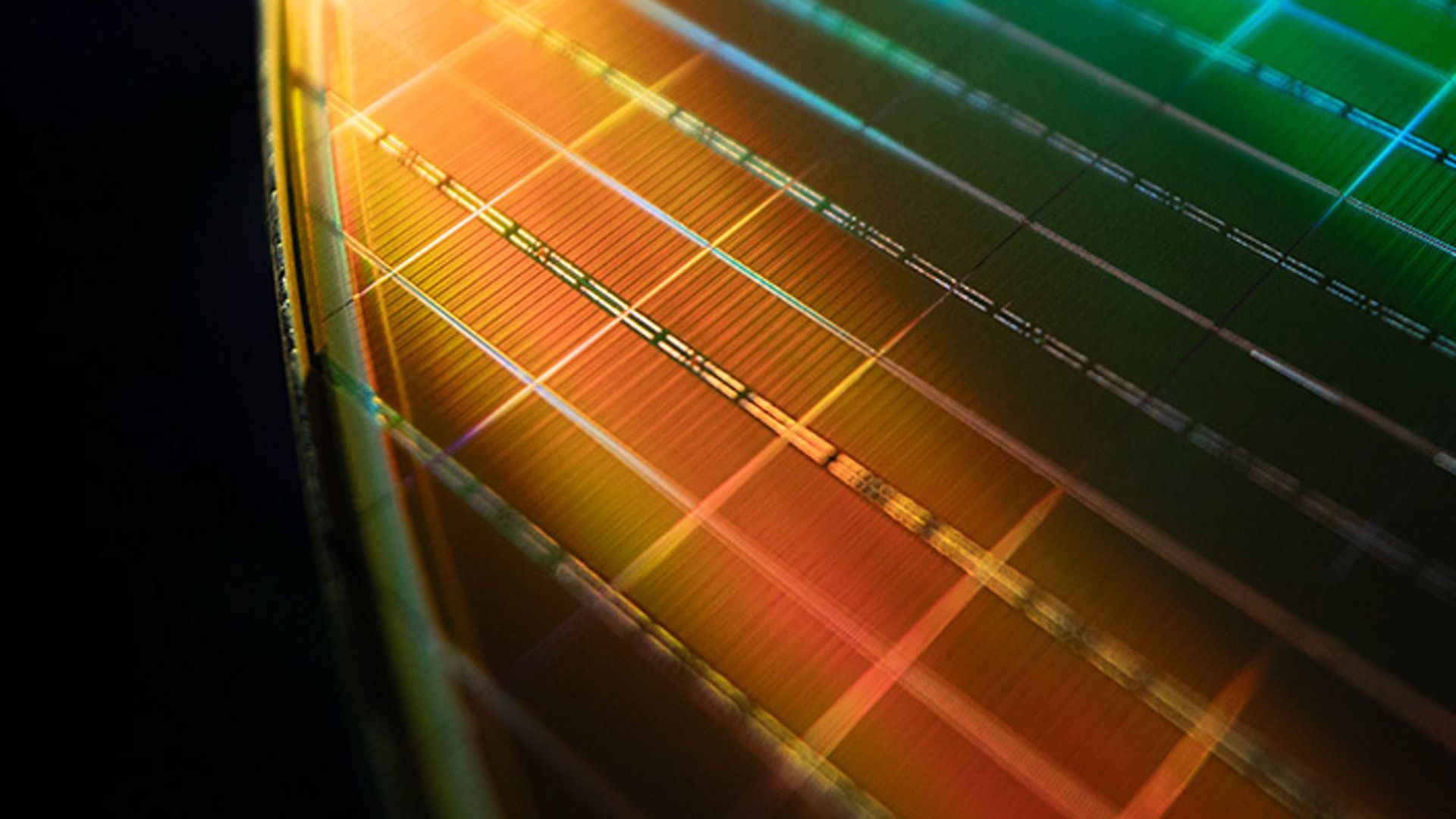

Approximately 90 percent of custom AI server processors will be based on Arm's instruction set architecture.

The projection reflects a fundamental restructuring of the AI server chip ecosystem. Amazon, Google, Microsoft, and Meta have all designed custom silicon for their data center workloads. This includes Arm-based CPUs such as Amazon's Graviton, Google's Axion, and Microsoft's Cobalt, as well as AI accelerators such as Amazon's Trainium, Google's TPU line, Microsoft's Maia, and Meta's MTIA. Deployment of those chips across their respective fleets is expanding rapidly.

The shift is driven

For Intel and AMD, the trend represents a structural threat to their data center business. Both companies still supply significant volumes of x86 chips for AI infrastructure, but the trajectory toward custom Arm silicon suggests their dominance in the data center is eroding over a multi-year horizon.

RISC-V, despite significant investment and growing support from the open-source ecosystem, is not expected to capture meaningful AI server share.

Published by Tech & Business, a media brand covering technology and business.

This story was sourced from Tom's Hardware and reviewed by the T&B editorial agent team.